
Peer-to-peer versus client/server

WebRTC was designed for P2P connections. But is that the fastest and most reliable Internet path? In theory, it should be. In practice, you may be heading down a dirt road in Tennessee.

WebRTC was designed to create a peer-to-peer (P2P) connection. This means my computer’s IP address tries to reach your IP address directly. The notion is that the shortest path between any two points is the best path. But is it?

> Google has recently touted that Hangouts will be using P2P for its voice and video sessions. (Source: engadget.com)

P2P, though, doesn’t take into account how the Internet was actually built. To understand this, we have to delve into the murky world of Internet Exchange Points.

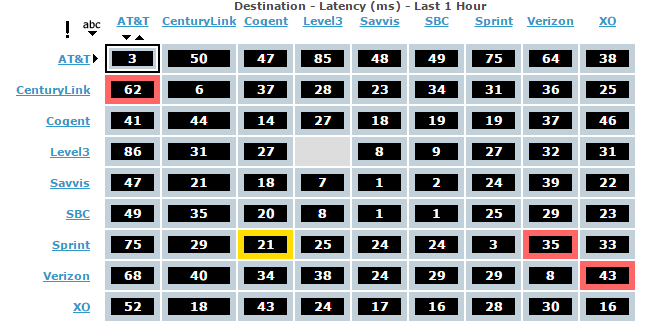

From http://internethealthreport.com/: a real-time view of “ping” times between major Internet transit points in the US.

In the hippie days of the Internet, operators created Internet Peer Points out of the goodness of their hearts. These Peer Points were nothing more than a big Ethernet switch that you could plug your network into to reach another network. You only had to pay for the connection port (which was usually not big money). MAE-East in Northern Virginia was one of the more famous early Internet Peer Points. As late as 1997, half of all Internet traffic went thru MAE-East.

As an ISP monitoring your traffic, you quickly figured out where your customer traffic was going to and coming from. For those high-traffic spots, the ISP would try to get a direct connection to that particular network. The process was often informal and personal. If the admin at the other network didn’t like you, they could ignore your request. Usually, a few beers and dinners helped seal deals.

With time, the process has become more formal and more about money. In 1997, Comcast would have sent a blank check to Netflix for an interconnect simply to drive cable Internet adoption. Today the tables have turned.

As an ISP, I either have users or content. I rarely have both (unless I’m one of the big transit providers). Traffic is usually not symmetrical (at least at the first point in the network). A user can send a short data request UP (send me a movie) and the DOWN response might be in gigabytes. An ISP wants to optimize these connections to reduce cost or increase speed.

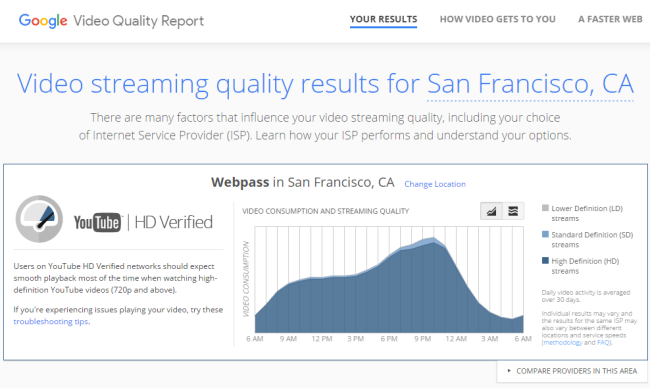

Google is front and center on the topic of Internet peering, because it’s core to you being their customer. On any given day in North America, 25% of all Internet traffic is Google related (60% of all connected devices will hit at least 1 Google service every day). If you’re an ISP, you’re keen to be directly connected to Google, both to save money and to give your customers a better, faster experience.

Google has a liberal and aggressive program to peer with any network of merit, and today it maintains several hundred peer points. All this is to ensure we get a speedy response.

Unfortunately, the Internet is optimized for this client/server model and NOT for peer-to-peer. Because end-user ISPs don’t usually have much traffic heading to other end-user ISPs (P2P), that inter-network traffic takes the digital equivalent of a dirt road. ISPs don’t spend a lot of time optimizing traffic between these types of networks.

ISPs don’t like this type of traffic either, for the simple reason that there is no win in it for them. Thus, they will often dump this lesser P2P traffic onto the lowest-cost connection partner (a catch-all route). Unfortunately, this may be just the first in a series of dumps, as the next guy may dump you as well.

The result: if you fire up a WebRTC video session from California to Africa, you’re not going to get the most reliable connection, due to the number of network providers you may be sent thru. This results in great fun with random speeds, packet loss, and even periodic disconnects. Applications like BitTorrent handle this situation well. A WebRTC client, not so much.

Mobile networks are even worse. In 1996, mobile operators hadn’t the foggiest idea that your mobile phone might one day try to peer with your friend 10 feet away. As a result, your mobile Internet data is assumed to be leaving the operator’s network and is transported a long way back to a common switching center.

Things could change, and should. P2P is in its infancy and we are clinging to the 1980s client/server model.

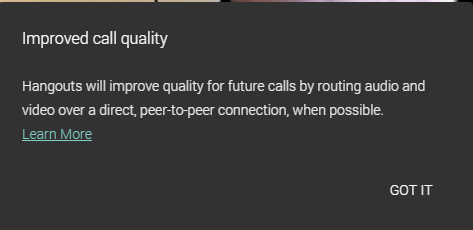

WebRTC defaults to a P2P connection and, not surprisingly, Google Hangouts (which uses WebRTC) is now trying to use peer-to-peer. A new dialog box pops up to let you know that the connection may be P2P. Ironically, as I captured this screen, Wireshark indicated my Hangouts connection was proxied thru a Google data center (meaning it wasn’t P2P). Big surprise: my Hangouts session was with Tsahi (who happened to be at his home in Tel Aviv).

This isn’t bad, it’s good! Tsahi’s ISP and mine are likely better optimized for connections to Google’s data center than to each other, so the fastest and most reliable path was via Google.
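The Wireshark check can also be approximated from inside the application, since the WebRTC statistics API reports the type of each ICE candidate (`host`, `srflx`, `prflx` or `relay`). The sketch below is a rough illustration, not how Hangouts does it: `StatRecord` and `isRelayed` are names I made up, the interface only mirrors the handful of stats fields used, and browsers differ in which stats they actually expose.

```typescript
// Local mirror of the few stats fields used here, so the sketch
// compiles without browser typings. StatRecord is an illustrative name.
interface StatRecord {
  id: string;
  type: string;
  selectedCandidatePairId?: string; // present on "transport" records
  localCandidateId?: string;        // present on "candidate-pair" records
  remoteCandidateId?: string;       // present on "candidate-pair" records
  candidateType?: string;           // present on candidate records
}

// Returns true if the selected path uses a TURN relay on either side,
// false if it is direct, and null if the records are not conclusive.
function isRelayed(stats: StatRecord[]): boolean | null {
  const byId: Record<string, StatRecord> = {};
  let pairId: string | undefined;
  for (const s of stats) {
    byId[s.id] = s;
    if (s.type === "transport" && s.selectedCandidatePairId) {
      pairId = s.selectedCandidatePairId;
    }
  }
  if (pairId === undefined) return null;
  const pair = byId[pairId];
  if (!pair || !pair.localCandidateId || !pair.remoteCandidateId) return null;
  const local = byId[pair.localCandidateId];
  const remote = byId[pair.remoteCandidateId];
  if (!local || !remote) return null;
  return local.candidateType === "relay" || remote.candidateType === "relay";
}

// In a browser, the records would come from the peer connection:
//   const report = await pc.getStats();
//   const records: StatRecord[] = [];
//   report.forEach((s) => records.push(s));
//   const relayed = isRelayed(records);
```

A `null` result just means the browser did not report a selected candidate pair in this form, so treat it as "unknown" rather than as proof of a direct path.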

WebRTC, today, does not have a built-in IF..THEN function to determine the best-quality network path (meaning when to use TURN and when to use P2P). WebRTC also doesn’t allow that connection path to change mid-session. This means you can’t fall back to a TURN server if a P2P connection starts to misbehave.
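An application can write its own IF..THEN before the call starts. The standard `RTCConfiguration` has an `iceTransportPolicy` field: `"relay"` restricts the connection to TURN candidates, while `"all"` lets ICE use a direct path. Here is a minimal sketch of that decision. The `PathEstimate` shape, the function names, the 20 ms margin and the TURN URL are all assumptions for illustration, and the local `PeerConfig` type only mirrors the two browser fields used.

```typescript
// Mirrors the two RTCConfiguration fields used here, so the sketch
// compiles without browser typings.
type IceTransportPolicy = "all" | "relay";

interface IceServer {
  urls: string;
  username?: string;
  credential?: string;
}

interface PeerConfig {
  iceServers: IceServer[];
  iceTransportPolicy: IceTransportPolicy;
}

// Hypothetical numbers the application measured before the call.
interface PathEstimate {
  directRttMs: number | null; // null: no direct probe got through
  relayRttMs: number;         // estimated peer -> TURN -> peer round trip
}

// The IF..THEN: force the relay only when it is clearly faster than
// the direct path (marginMs is an arbitrary illustrative threshold).
function choosePolicy(est: PathEstimate, marginMs = 20): IceTransportPolicy {
  if (est.directRttMs === null) return "relay";
  return est.relayRttMs + marginMs < est.directRttMs ? "relay" : "all";
}

function buildConfig(est: PathEstimate, turn: IceServer): PeerConfig {
  return { iceServers: [turn], iceTransportPolicy: choosePolicy(est) };
}

// In a browser:
//   const turn = { urls: "turn:turn.example.org:3478", username: "u", credential: "p" };
//   const pc = new RTCPeerConnection(buildConfig(estimate, turn));
```

Because the path can’t be swapped mid-session, the choice has to be made up front, so a cautious application may prefer `"relay"` whenever the direct estimate looks shaky.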

Google appears to check the network before starting a Hangouts session (this is their competitive advantage). This could be a simple ping test between the peers themselves, and between each peer and the TURN server, to determine the shortest Internet path. This is outside the confines of WebRTC as a technology, so as a developer you need to decide whether to build it yourself.
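What such a ping test might compute can be sketched in a few lines. This is a guess at the general idea, not Google’s actual method: the function names, the 2% loss threshold, and the shortcut of adding the two peer-to-TURN medians to estimate the relayed round trip are all assumptions.

```typescript
// One probe result: round-trip time in ms, or null if the probe timed out.
type Sample = number | null;

interface PathStats {
  medianRttMs: number;
  lossRate: number; // fraction of probes lost, 0..1
}

// Summarize a batch of probes into a median RTT and a loss rate.
function summarize(samples: Sample[]): PathStats {
  const ok = samples
    .filter((s): s is number => s !== null)
    .sort((a, b) => a - b);
  const lossRate = samples.length === 0 ? 1 : 1 - ok.length / samples.length;
  if (ok.length === 0) return { medianRttMs: Infinity, lossRate };
  const mid = Math.floor(ok.length / 2);
  const medianRttMs = ok.length % 2 === 1 ? ok[mid] : (ok[mid - 1] + ok[mid]) / 2;
  return { medianRttMs, lossRate };
}

// Rough estimate of the relayed path from each peer's leg to the TURN server.
function viaRelay(a: PathStats, b: PathStats): PathStats {
  return {
    medianRttMs: a.medianRttMs + b.medianRttMs,
    lossRate: 1 - (1 - a.lossRate) * (1 - b.lossRate),
  };
}

// Prefer whichever path stays under the loss threshold; if both (or
// neither) do, prefer the lower median round-trip time.
function preferRelay(direct: PathStats, relay: PathStats, maxLoss = 0.02): boolean {
  const directLossy = direct.lossRate > maxLoss;
  const relayLossy = relay.lossRate > maxLoss;
  if (directLossy && !relayLossy) return true;
  if (relayLossy && !directLossy) return false;
  return relay.medianRttMs < direct.medianRttMs;
}
```

For example, `preferRelay(summarize(directProbes), viaRelay(summarize(aToTurn), summarize(bToTurn)))` would give a yes/no answer that the application can act on before it opens the media session.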

P2P is the best solution when all networks are equally well connected. However, that’s not how the Internet works in reality, and you’ll need to give this some consideration in any real-time media application.